Abstract

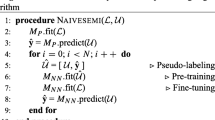

It is well known that for some tasks, labeled data sets may be hard to gather. Self-training, or pseudo-labeling, tackles the problem of having insufficient training data. In the self-training scheme, the classifier is first trained on a limited, labeled dataset, and after that it is trained on an additional, unlabeled dataset, using its own predictions as labels, provided those predictions are made with high enough confidence. Using credible interval based on MC-dropout as a confidence measure, the proposed method is able to gain substantially better results comparing to several other pseudo-labeling methods and out-performs the former state-of-the-art pseudo-labeling technique by 7\(\%\) on the MNIST dataset. In addition to learning from large and static unlabeled datasets, the suggested approach may be more suitable than others as an online learning method where the classifier keeps getting new unlabeled data. The approach may be also applicable in the recent method of pseudo-gradients for training long sequential neural networks.

D. Bank, D. Greenfeld and G. Hyams—Equally contributed authors, writers are presented by the alphabetical order.

This study was supported in part by fellowships from the Edmond J. Safra Center for Bioinformatics at Tel-Aviv University and from The Manna Center for Food Safety and Security at Tel-Aviv University.

Access this chapter

Tax calculation will be finalised at checkout

Purchases are for personal use only

Similar content being viewed by others

References

Nigam, K., Ghani, R.: Analyzing the effectiveness and applicability of co-training. In: Proceedings of the Ninth International Conference on Information and Knowledge Management, pp. 86–93. ACM (2000)

Amini, M.-R., Gallinari, P.: Semi-supervised logistic regression. In: Proceedings of the 15th European Conference on Artificial Intelligence, ECAI 2002, pp. 390–394. IOS Press, Amsterdam (2002)

Hinton, G.E., Srivastava, N., Krizhevsky, A., Sutskever, I., Salakhutdinov, R. R.: Improving neural networks by preventing co-adaptation of feature detectors, arXiv preprint arXiv:1207.0580 (2012)

Lee, D.-H.: Pseudo-label: the simple and efficient semi-supervised learning method for deep neural networks. In: Workshop on Challenges in Representation Learning. ICML, vol. 3, p. 2 (2013)

Salimans, T., Goodfellow, I., Zaremba, W., Cheung, V., Radford, A., Chen, X.: Improved techniques for training gans. In: Advances in Neural Information Processing Systems, pp. 2234–2242 (2016)

Valpola, H.: From neural PCA to deep unsupervised learning. Advances in Independent Component Analysis and Learning Machines, pp. 143–171 (2015)

Rasmus, A., Berglund, M., Honkala, M., Valpola, H., Raiko, T.: Semi-supervised learning with ladder networks. In: Cortes, C., Lawrence, N.D., Lee, D.D., Sugiyama, M., Garnett, R. (eds.) Advances in Neural Information Processing Systems, vol. 28, pp. 3546–3554. Curran Associates, Inc. (2015)

Pereyra, G., Tucker, G., Chorowski, J., Kaiser, Ł., Hinton, G.: Regularizing neural networks by penalizing confident output distributions, arXiv preprint arXiv:1701.06548 (2017)

Gal, Y., Ghahramani, Z.: Dropout as a Bayesian approximation: insights and applications. In: Deep Learning Workshop, ICML (2015)

Kuncheva, L.I., Whitaker, C.J., Shipp, C.A., Duin, R.P.: Limits on the majority vote accuracy in classifier fusion. Pattern Anal. Appl. 6(1), 22–31 (2003)

Grandvalet, Y., Bengio, Y.: Semi-supervised learning by entropy minimization. In: Advances in Neural Information Processing Systems, pp. 529–536 (2005)

Pitelis, N., Russell, C., Agapito, L.: Semi-supervised learning using an unsupervised atlas. In: Joint European Conference on Machine Learning and Knowledge Discovery in Databases, pp. 565–580. Springer (2014)

Hariharan, B., Girshick, R.: Low-shot visual recognition by shrinking and hallucinating features, arXiv preprint arXiv:1606.02819 (2016)

Bulo, S.R., Neuhold, G., Kontschieder, P.: Loss max-pooling for semantic image segmentation, arXiv preprint arXiv:1704.02966 (2017)

Arthur, D., Vassilvitskii, S.: k-means++: the advantages of careful seeding. In: Proceedings of the Eighteenth Annual ACM-SIAM Symposium on Discrete Algorithms. Society for Industrial and Applied Mathematics, pp. 1027–1035 (2007)

Author information

Authors and Affiliations

Corresponding author

Editor information

Editors and Affiliations

Rights and permissions

Copyright information

© 2019 Springer Nature Switzerland AG

About this paper

Cite this paper

Bank, D., Greenfeld, D., Hyams, G. (2019). Improved Training for Self Training by Confidence Assessments. In: Arai, K., Kapoor, S., Bhatia, R. (eds) Intelligent Computing. SAI 2018. Advances in Intelligent Systems and Computing, vol 858. Springer, Cham. https://doi.org/10.1007/978-3-030-01174-1_13

Download citation

DOI: https://doi.org/10.1007/978-3-030-01174-1_13

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-01173-4

Online ISBN: 978-3-030-01174-1

eBook Packages: Intelligent Technologies and RoboticsIntelligent Technologies and Robotics (R0)